Amnesty International warns that TikTok’s recommendations push children towards harmful mental health content

In a press release and two reports (“Driven into Darkness” and “I Feel Exposed”), Amnesty International strongly criticizes TikTok’s recommender system. I have not read the reports in full, but each is around 60 pages long and contains serious allegations.

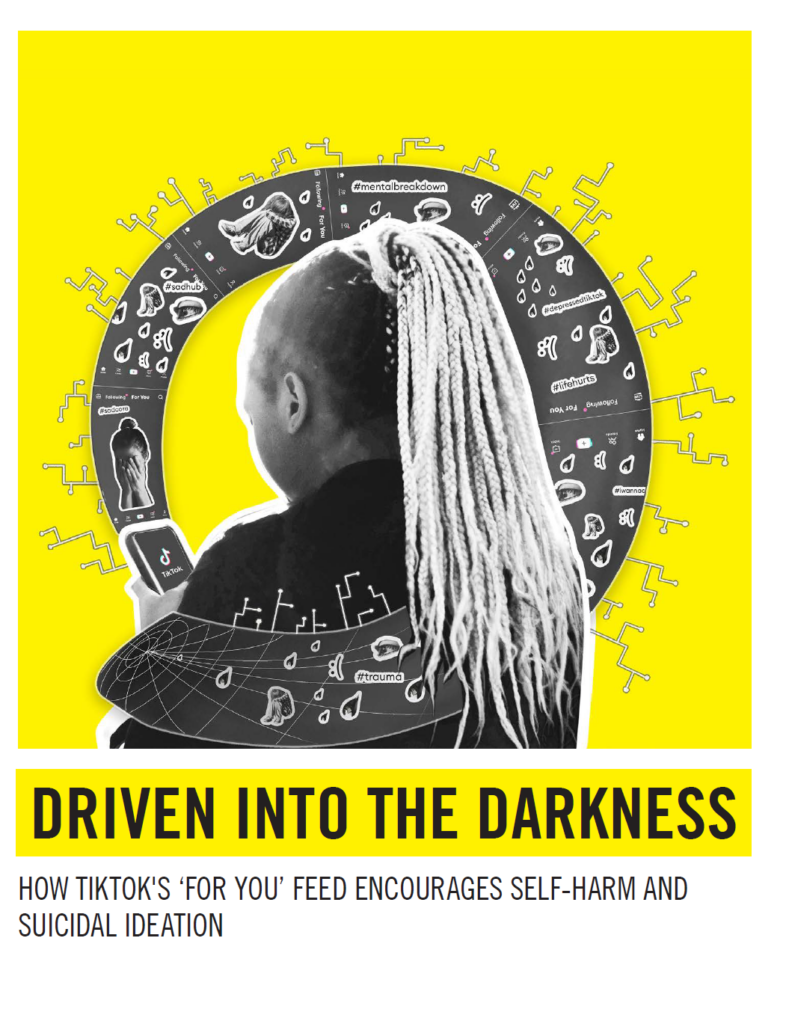

In summary, Amnesty International says its research has revealed concerning findings about TikTok’s impact on young users, especially their mental health. According to the reports, TikTok’s “For You” feed quickly steers users, particularly teenagers, towards content about mental health struggles. Within a few hours, nearly half of the videos shown to users who had signaled an interest in mental health were potentially harmful, and the effect was even more pronounced in the manual tests. These videos often romanticize or normalize serious issues such as depression and self-harm.

The reports also scrutinize TikTok’s design and business model. The platform’s algorithms, which contributed to its rapid rise, are designed to keep users engaged for as long as possible. This can be particularly harmful for young people with existing mental health challenges. The “For You” feed, TikTok’s central feature, personalizes content to each user’s interests and can draw them into a cycle of increasingly negative or harmful content. Amnesty International criticizes this addictive design for exacerbating mental health problems such as anxiety, depression, and self-harm among young users.

Finally, TikTok’s data collection practices are a major concern. The platform collects extensive user data to keep users engaged and to serve ever more tailored content. This practice not only feeds the cycle of harmful content but also raises questions about user privacy and the ethical use of data. Amnesty International concludes that TikTok needs to make significant changes to protect its users, especially younger ones, from these harms.