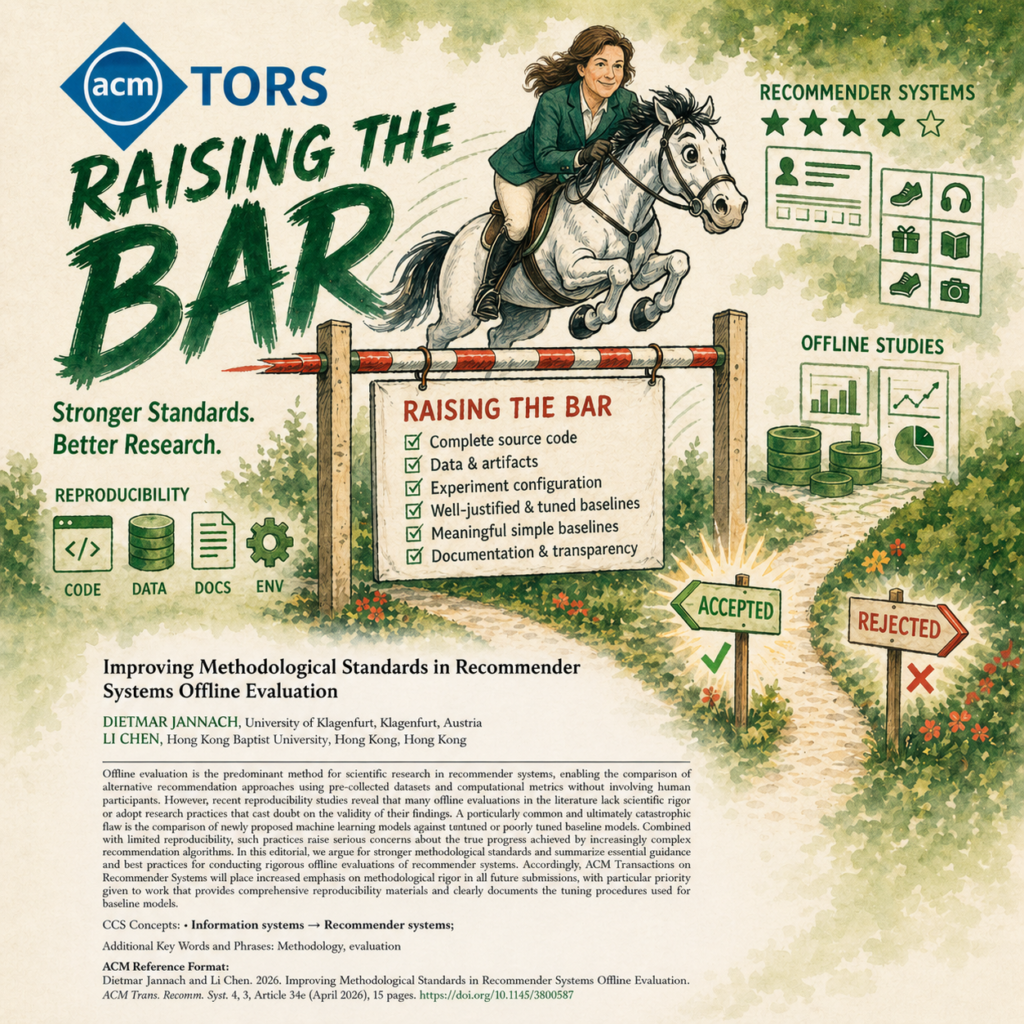

ACM TORS Raises the Bar: The New Policy for Better Reproducibility in Offline Studies

ACM Transactions on Recommender Systems (ACM TORS) is placing increased emphasis on methodological rigor in submissions that rely on offline evaluation. The goal is to further strengthen the quality, reliability, and interpretability of research published in the journal, particularly for algorithmic papers that compare new recommendation models against existing baselines via offline evaluations.

The change is described in the editorial “Improving Methodological Standards in Recommender Systems Offline Evaluation” by the Editors-in-Chief Dietmar Jannach and Li Chen. The article addresses a concern that has been discussed in the recommender-systems community for many years: many published offline evaluations are difficult to reproduce, difficult to compare, or insufficiently clear about whether reported improvements over baselines reflect genuine progress.

Offline evaluation remains one of the most widely used methods in recommender-systems research. It allows researchers to compare algorithms using existing datasets and computational metrics, without the cost and complexity of user studies or A/B tests, which makes it highly valuable. At the same time, offline evaluation is sensitive to many design choices: dataset preprocessing, train-test splits, metric implementation, baseline selection, hyperparameter tuning, random seeds, and software environments can all influence the final results.
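To illustrate how one of these design choices can be pinned down, here is a minimal sketch of a seeded random train-test split. It is not taken from the editorial; the function name `split_interactions`, the toy interaction data, and the 80/20 ratio are assumptions made purely for illustration.

```python
import random


def split_interactions(interactions, test_fraction=0.2, seed=42):
    """Shuffle with a dedicated seeded generator and hold out the last fraction."""
    rng = random.Random(seed)  # local generator, leaves global random state untouched
    shuffled = list(interactions)
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    cut = len(shuffled) - n_test
    return shuffled[:cut], shuffled[cut:]


# Hypothetical toy data: (user_id, item_id) pairs.
interactions = [(u, i) for u in range(10) for i in range(5)]

train_a, test_a = split_interactions(interactions, seed=42)
train_b, test_b = split_interactions(interactions, seed=42)

print(test_a == test_b)            # same seed and same input give the same split
print(len(train_a), len(test_a))   # sizes of the two partitions
```

Reporting the seed alongside the split procedure, or sharing the resulting split files directly, lets others evaluate on exactly the same held-out data.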

The central issue, therefore, is not whether offline evaluation is useful. It clearly is. The issue is whether offline evaluations are documented and implemented in a way that allows reviewers and readers to assess the validity of the claims.

What Is Changing?

The editorial highlights a set of central quality criteria for papers that use offline evaluation. These criteria concern two closely related areas: reproducibility and fair comparison. They are intended to help authors, reviewers, and readers better assess whether reported findings are robust, transparent, and meaningful.

First, algorithmic papers submitted to ACM TORS should generally be accompanied by the source code needed to reproduce the full experimental pipeline. This includes not only the proposed model, but also code for baselines, preprocessing and postprocessing, training and testing, hyperparameter tuning, statistical analysis, documentation, and execution instructions. Where possible, relevant data artifacts should also be made available, including preprocessed datasets, train/validation/test splits, experimental results, and trained models.
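One possible way to make shared data artifacts verifiable is to publish a checksum manifest next to them. The sketch below is only an illustration under assumed names: the helper functions `sha256_of` and `build_manifest` and the `splits/` directory layout are hypothetical, not something the editorial prescribes.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of(path):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(artifact_dir):
    """Map each file's relative path to its checksum."""
    root = Path(artifact_dir)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


# Hypothetical artifact layout, created in a temporary directory for the demo.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "splits").mkdir()
    (root / "splits" / "train.csv").write_bytes(b"user,item\n1,10\n2,20\n")
    (root / "splits" / "test.csv").write_bytes(b"user,item\n1,30\n")
    manifest = build_manifest(root)

print(json.dumps(manifest, indent=2))
```

A reader who downloads the artifacts can recompute the manifest and compare it with the published one to confirm that the splits are byte-for-byte identical.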

Second, authors should document the configuration of their experiments as clearly as possible. This includes the hyperparameter search spaces, tuning strategies, search budgets or search times, optimal hyperparameters per dataset and model, random seeds, software dependencies, and hardware used. These details matter because small implementation or configuration choices can meaningfully affect reported performance.
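As a rough sketch of what such documentation could look like in machine-readable form, the following snippet collects the listed items into one JSON record per dataset and model. The field names, the function `build_experiment_record`, and all values (dataset name, search space, best parameters) are placeholders assumed for illustration; the editorial does not define a specific format.

```python
import json
import platform
import sys


def build_experiment_record(dataset, model, search_space, tuning, best_params, seeds):
    """Bundle configuration details for one dataset/model pair."""
    return {
        "dataset": dataset,
        "model": model,
        "search_space": search_space,
        "tuning": tuning,
        "best_params": best_params,
        "seeds": seeds,
        "environment": {
            "python": sys.version.split()[0],
            "platform": platform.platform(),
            "machine": platform.machine(),
        },
    }


# All values below are hypothetical placeholders, not real results.
record = build_experiment_record(
    dataset="example-dataset",
    model="item-knn",
    search_space={"k": [10, 50, 100, 200], "shrinkage": [0, 10, 100]},
    tuning={"strategy": "random search", "budget_trials": 50},
    best_params={"k": 100, "shrinkage": 10},
    seeds=[0, 1, 2, 3, 4],
)

serialized = json.dumps(record, indent=2, sort_keys=True)
print(serialized)
```

Software dependencies would typically be captured separately as well, for example through a pinned requirements file or a container image, since the Python version alone does not describe the full environment.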

Third, baseline selection and tuning should be described clearly. Authors should explain why the selected baselines are appropriate for the research question. Where suitable, submissions should include both strong recent baselines and meaningful simple baselines, such as popularity-based recommenders, kNN methods, random baselines, matrix factorization, or linear models. Just as importantly, baselines should be systematically tuned with comparable care to the proposed method.
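One simple reading of "comparable care" is to give every method, proposed or baseline, the same tuning procedure and the same search budget. The sketch below shows this with a plain random search. The two `evaluate_*` functions are hypothetical stand-ins for a validation metric, and the search spaces and the budget of 30 trials are assumptions chosen only for the example.

```python
import random


def random_search(evaluate, search_space, n_trials, seed):
    """Sample n_trials configurations and keep the best-scoring one."""
    rng = random.Random(seed)
    best_score, best_params = float("-inf"), None
    for _ in range(n_trials):
        params = {name: rng.choice(values) for name, values in search_space.items()}
        score = evaluate(params)
        if score > best_score:
            best_score, best_params = score, params
    return best_params, best_score


# Hypothetical stand-ins for "train the model, return a validation metric".
def evaluate_proposed(params):
    return 0.30 - abs(params["lr"] - 0.01) - 0.001 * abs(params["dim"] - 64)


def evaluate_knn(params):
    return 0.28 - 0.0005 * abs(params["k"] - 100)


candidates = {
    "proposed": (evaluate_proposed, {"lr": [0.001, 0.01, 0.1], "dim": [32, 64, 128]}),
    "item-knn": (evaluate_knn, {"k": [10, 50, 100, 200]}),
}

BUDGET = 30  # identical number of trials for every method

results = {
    name: random_search(fn, space, n_trials=BUDGET, seed=0)
    for name, (fn, space) in candidates.items()
}

for name, (params, score) in results.items():
    print(name, params, round(score, 4))
```

Other budget definitions, such as equal wall-clock time, are equally plausible; what matters is that the chosen budget and strategy are reported for every compared method.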

This last point is particularly important. A new model can look impressive when compared against weak or poorly tuned baselines. Conversely, several studies have shown that simpler, older methods can remain competitive when they are carefully tuned and evaluated under comparable conditions. The goal is not to discourage new models, but to make sure that claims of improvement are supported by fair evidence.

Why This Matters

A recurring problem in recommender-systems research is that many papers report improvements over the state of the art, but the underlying comparisons are often hard to verify. Different papers may use different preprocessing strategies, data splits, metrics, baseline implementations, and tuning procedures. As a result, it can be difficult to determine whether a reported improvement reflects a better algorithm or simply a different experimental setup.

The editorial highlights several issues observed in the literature: weak or inappropriate baselines, insufficient baseline tuning, flawed evaluation practices, incomplete third-party implementations, and limited reproducibility materials. In many cases, authors share the code for their proposed model, but not the full pipeline required to reproduce the actual comparative claim.

This distinction is important. For many algorithmic papers, the main claim is not simply “we built a model.” The main claim is “our model performs better than existing alternatives.” To verify that claim, readers need access to the full evaluation process, including the baselines and the way they were tuned.

In other words: sharing the model code is good; sharing the experiment is better.

The Relation to the Broader Best-Practice Checklist

The criteria emphasized in the editorial are closely related to a broader set of best-practice recommendations proposed by Beel, Jannach, Said, Shani, Vente, and Wegmeth in “Best-Practices for Offline Evaluations of Recommender Systems,” published as part of the Dagstuhl Seminar report Evaluation Perspectives of Recommender Systems: Driving Research and Education.

That broader checklist covers a wide range of issues, including research questions and hypotheses, dataset choice, preprocessing, data splitting, metrics, hyperparameter optimization, statistical testing, sensitivity analysis, code availability, data availability, and execution environments. It is intended as a comprehensive tool for improving the quality and transparency of offline evaluations.

ACM TORS encourages authors to use and submit the full checklist where appropriate. At the same time, the editorial places particular emphasis on a smaller set of central issues, especially the availability of reproducibility materials and the clear documentation of baseline tuning.

This is a practical distinction. The full checklist offers a broad framework for rigorous offline evaluation, while the editorial highlights those aspects that are especially important for assessing empirical claims based on offline experiments.

Valid Exceptions Are Possible

ACM TORS also recognizes that not every artifact can always be shared. There may be legitimate restrictions related to proprietary data, organizational policies, privacy, licensing, or third-party code. In some cases, retuning a baseline may also be unnecessary if the optimal configuration has already been established under an identical experimental setup.

The important point is that such limitations should be stated clearly. Authors should explain what cannot be shared, why it cannot be shared, and how this affects the reproducibility and interpretation of the results. This allows editors, reviewers, and readers to make an informed assessment.

The expectation is therefore not one of impossible uniformity. Rather, it is a call for transparency, completeness where feasible, and clear justification where completeness is not feasible.

A Step Toward More Reliable Progress

This increased emphasis by ACM TORS is a step toward making published results easier to verify, compare, and build upon. It may require more work from authors, especially in documenting code, tuning baselines, preserving data splits, and reporting configuration details. It may also encourage smaller but more carefully designed experiments.

In many cases, this is likely to be a worthwhile trade-off.

The purpose is not to slow down research for its own sake. The purpose is to support research that is easier to trust. When authors provide complete experimental pipelines, justify their baselines, and document tuning procedures, reviewers can assess the work more effectively, readers can better understand the results, and future researchers can build on the findings with greater confidence.

For ACM TORS, the message is constructive: strong recommender-systems research is not only about proposing interesting methods. It is also about providing the evidence, artifacts, and documentation that help the community understand, verify, and build on those methods.